3 Key Resources for Data Vault on Snowflake

By now you surely know that you can build a Data Vault on Snowflake. In fact we have many customers doing so today. So much so that we formed a Snowflake Data Vault User Group.

Over the years I have had hundreds of calls and meetings with organizations around the world discussing this topic from just basic Data Vault 101 type questions to best practices to who is doing Data Vault on Snowflake. Because of that we developed a Data Vault Resource Kit that points you to all the key blog posts, videos, and customer stories on the topic (scroll down to see everything!). Be sure to bookmark that page. Most of your questions on this topic can be answered there.

To take it a step further and to a deeper level, I partnered up with Snowflake Field CTO Dmytro Yarashneko (CDVP2) and wrote a post with reference architectures and discussions related to doing real time feeds into a Data Vault 2.0 on Snowflake. Check that out here. This article even has code!

And, at long last, for those that want to jump in feet first and try it for yourself, the team built a Data Vault Quickstart , based on the above article and a hands on lab from WWDVC 2021, that gives you a step-by-step guide and all the code to build and load a Data Vault 2.0 system, including an information mart on top of the Data Vault, all in your very own Snowflake account.

So, what is your excuse now? You have all the resources you need to give it a go!

And please, bookmark this post and/or the links above so you don’t lose them!

Model on!

Kent

The Data Warrior

Regarding pk being an MD5 hash doesn’t that mean given the super high cardinality on the pk, there wouldn’t exist an optimized way to choose how to physically cluster data (either automatically by snowflake or manually with us telling what column(s) made up cluster key on the table ?

In many cases on Snowflake, unless you have massive tables, you should not be manually clustering anyway. And in DV 2.0 folks use MD5, SHA1, SHA2 and sometimes natural keys. Lots of discussion on the approach out on the data vault alliance site too. Bottom line though – customers are not having performance issues on queries that can’t be resolved with PIT and BRIDGE tables or in many cases upsizing their virtual warehouse a little. Check this post too for more details and discussion https://www.linkedin.com/pulse/data-vault-20-snowflake-hash-question-patrick-cuba/

Best advice is to try it for your data, then evaluate all the pros and cons.

The fact that I used a diagram showing MD5 for the PK should not be taken as that being the only way, or best way, to do it in all cases. 🙂

thanks so much for the link, i was aware of the basic of data vault but interesting the PiT and Bridges snapshots to boost performance in some scenarios..

Im follower of yours, so big embrace from Europe 🌍

Emanueoi, you could watch the Snowflake webinar delivered in Oct about test and query patterns as it pertains to data vault. It expands on the article Kent referenced above. Click here: https://bit.ly/3qdpIDv

passcode is: S5sa4&ZY

Thanks Patrick for the access to your webinar above. I could neither find your presentation slides nor the code you demo’ed from your GitHub site. Could you please share the link to these resources as well? Many thanks!

You should take a look at my Agile Data Engineering book on Amazon and Dan’s Data Vault 2.0 book to learn more about PITs and Bridges. There is a lot more to DV 2.0 tha hubs, links and sats.

Thanks @patrickcuba8 for the access.

@Kent Graziano could you share exact book names or urls to be sure ? Thanks so much.

Click the images on the right side of the blog. That will get you there.

Thanks Kent for the awesome resources you shared above. I didn’t know there was a user group so I just joined the Snowflake DV User Group at https://usergroups.snowflake.com/data-vault/. Looks like I missed a few past events, however, when I click on these past events, it doesn’t provide a way for me to play the recording. Were these recorded by any chance?

Yes they were. I think the link was sent to attendees. You can email community@snowflake.com to see if they can give you the links.

Here is the recording from the first roundtable meeting I hosted. https://www.youtube.com/watch?v=Rm25db-P8YQ

Hi Kent,

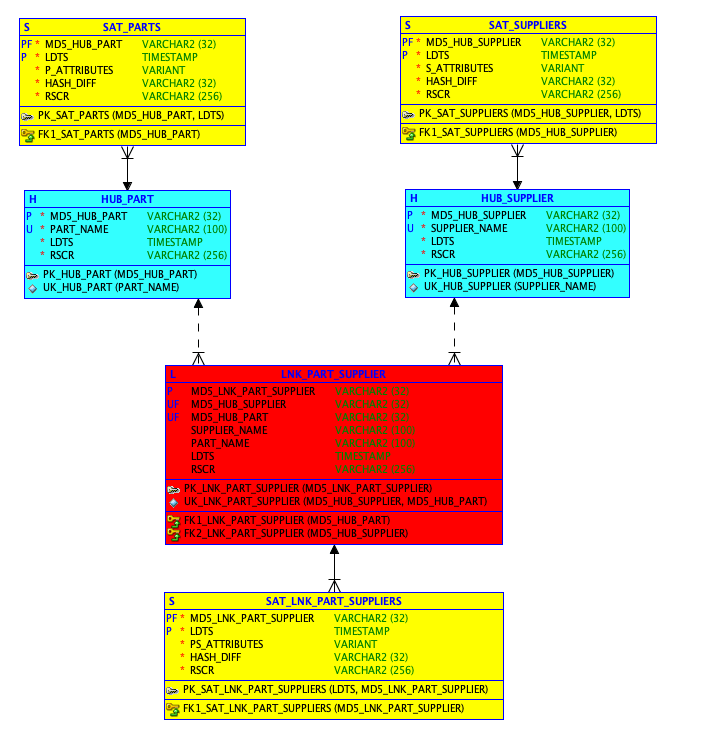

I recently read that attaching Satellite to a Link is no longer a DV 2.0 standard. Is this true ? If yes, how do we re-model the exisiting SAT – LINK relation ?

Not sure where you read that but it is totally false except in the case of a non-historized, transaction, link. Otherwise if a relationship, i.e. link, has dependant attributes then there is of course a Sat to hold those. Hopefully what you read was not written by someone with the CDVP2 certification. If it was then they were not following the standards as set by Dan Linstedt.

BTW, another standard is you never re-engineering existing models, so you would never remodel and rebuild or reload the existing objects in any case. Unless of course you really did mess it up bad. 😉