Oracle OpenWorld 2012: Day 4

It was another beautiful, sunny day in San Francisco. I started the day, again, with some morning Chi Gung, then I enjoyed the morning keynote watching the big screen in Yerba Buena Gardens. Quite a pleasant way to listen to these talks.

It was a light day session-wise for me, but it did set off a few light bulbs.

The best session of the day (and, for me, of the whole conference) was Gwen Shapira’s (from Pythian) talk on building an integrated data warehouse with Hadoop. Gwen did a superb job of explaining what Big Data is and what it isn’t.

Her simple, and straightforward, definition:

The “cheaply” part seems to be the key. Oracle, and other databases, can handle really HUGE amounts of data. Petabytes in fact. But putting all that data into an RDBMS can cost a lot more money than having it stored in a less sophisticated file system on commodity drives (like HDFS).

So just having lots of data in your warehouse does not mean you have Big Data; you just have a Very Large Data Warehouse (VLDW).

She went on to expand the definition:

This part shed even more light on Big Data for me and helped clarify when you might actually be dealing with it.

The talk was filled with technical details, limitations, and tools (Sqoop, Flume, Fuse-DFS) you can look at for integrating Hadoop with Oracle. Of course there are Oracle’s offerings as well, like Oracle Loader for Hadoop and Oracle Direct Connector for HDFS.

Gwen also gave us several use case examples that illustrated when to use Hadoop. Bottom line: learn to use Hadoop appropriately, not just because it is cool. With tech we can:

Make the impossible, possible. That might not make the possible easy.

If you went to OOW, find and download Gwen’s slides. And follow her on Twitter (@gwenshap).

It was Big Data day for me. My other session was Ian Abramson’s session on Agile & Data. Two of my favorite topics.

Ian discussed the Agile Manifesto and Big Data and how he has been able to use agile techniques to make his projects successful.

To start, here is his simple definition of Big Data:

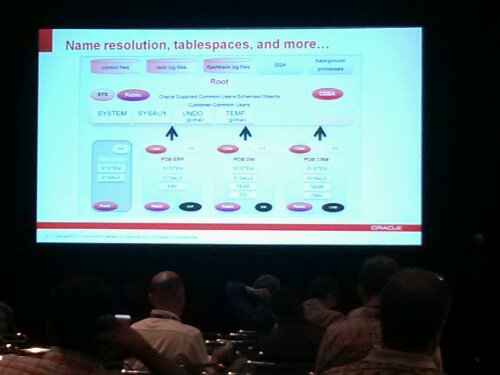

Ian had a nice picture of the overall architecture as well:

To be successful in applying agile to data projects, Ian has determined that the projects must be driven by data value; that is, the sprint priorities are set based on the data that can best help the customer achieve their goals. To stay on track and keep velocity, it is important to have daily touch-points with the team members as well. Ian does a daily stand-up for 15 minutes.

Ian shared lots of details and answered a lot of my annoying questions too. He came up with a great tree graphic to illustrate important factors in having a high performance project:

Again, find and download the slides once Oracle uploads them. In the meantime, follow Ian on Twitter (@iabramson). A data-centric agilist is hard to find. For more on agile and data warehousing, check out my classic white paper on the subject.

After Ian’s session I got to go to my first Oracle blogger meet up. It was nice to put more faces to names. Thanks to Pythian and OTN for sponsoring it.

Then back to the hotel to pack and then stand in line (for an hour!) to get to the appreciation event and see Pearl Jam live. It was a good concert. Hard to beat live music outdoors!

Well that’s it for me on OOW2012. I am back home in Houston now and heading into the office tomorrow. Then I need to write another abstract or two for KScope13 and RMOUG TD2013. Then it will be time to plan for OOW2013 and The America’s Cup finals…

Nap time.

Kent
