Oracle OpenWorld 2012: User Group Sunday

Yes, today was the first day of #OOW 2012, affectionately known to many of us as User Group Sunday. Along with a ton of other activities, this is the day the various Oracle user groups get to “own” the agenda and put together the sessions they think Oracle customers, and their members, might want to see.

By users; for users.

For the second year, ODTUG asked me to curate their agenda. I was fortunate enough to “recruit” some great track leads who invited and vetted speakers and sessions to fill five rooms for most of the day. It was quite successful. (Thanks for the hard work, guys.)

I attended quite a few sessions myself and captured a few photos and thoughts. I was tweeting all day, so you can also go to Twitter and search on @Kentgraziano to see my Twitter stream.

After checking in at the User Group kiosk, I went to my first session, given by Gwen Shapira and Robyn Sands, who spoke about Flexible Design and Data Modeling. Great topic. They gave some very practical advice on do’s and don’ts if you want to be more agile.

“Just good enough” does not scale.

If you want some more modeling best practices, check out my ebook on Amazon: http://www.amazon.com/Check-Doing-Design-Reviews-ebook/dp/B008RG9L5E/.

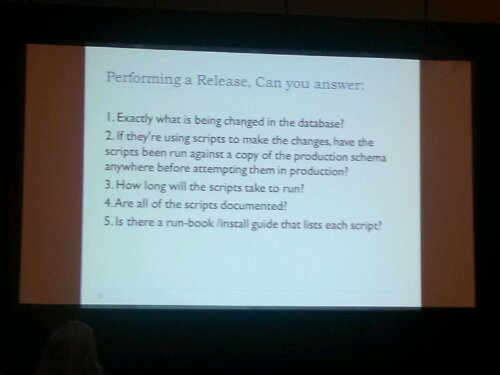

Next I went on to see Kellyn Pot’vin and Stewart Bryson do a DBA vs. Developers showdown with No Surprises Development.

Best advice – practice your deployments several times before going live…

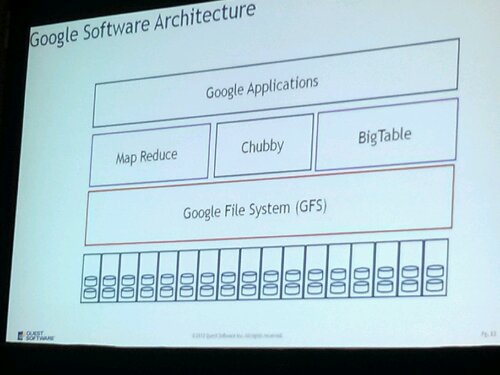

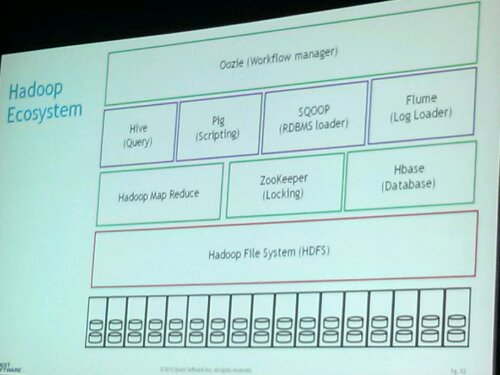

Next: Guy Harrison talked about Hadoop, Big Data, and Exadata. This was a very helpful intro talk about the space. I have been trying to wrap my mind around Hadoop, NoSQL, unstructured data, etc. and how we deal with it. He had lots of great diagrams and examples to help explain.

Sigh…more to learn.

Next was a very interesting session by Mark Rittman about the Oracle Endeca software, how it can be used in a BI environment, and how it complements OBIEE.

This gives a quick view of what is involved with the Oracle Endeca Platform.

It looks like a very interesting platform that uses key-value pairs to store the data. This enables search and analytics on some relatively unstructured data stores (i.e., not relational tables).
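To make the key-value idea a bit more concrete, here is a minimal Python sketch. It is purely illustrative and is not how Endeca is actually implemented; the class name `KeyValueStore`, its methods, and the sample records are all made up for this example. The point is only that records do not need to share a fixed set of columns, yet can still be searched by any attribute.

```python
from collections import defaultdict


class KeyValueStore:
    """Hypothetical toy store: each record is a free-form dict of key-value pairs."""

    def __init__(self):
        self.records = {}
        # Inverted index: (key, value) -> set of record ids holding that pair.
        self.index = defaultdict(set)

    def add(self, record_id, attributes):
        """Store a record and index every key-value pair it contains."""
        self.records[record_id] = attributes
        for key, value in attributes.items():
            self.index[(key, value)].add(record_id)

    def search(self, **criteria):
        """Return sorted ids of records matching ALL the given key-value pairs."""
        matches = None
        for key, value in criteria.items():
            ids = self.index.get((key, value), set())
            matches = set(ids) if matches is None else matches & ids
        # With no criteria at all, every record matches.
        return sorted(self.records) if matches is None else sorted(matches)


# Records with different "shapes" live side by side; no shared schema is required.
store = KeyValueStore()
store.add("r1", {"type": "review", "product": "bike", "sentiment": "positive"})
store.add("r2", {"type": "tweet", "product": "bike"})
store.add("r3", {"type": "review", "product": "helmet", "author": "kent"})

print(store.search(product="bike"))
print(store.search(type="review", product="bike"))
```

A relational table would need a column (or a join) for every possible attribute; here a record simply carries whatever pairs it has, and the index makes each one searchable.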

The final talk of the day (for me) was Jon Mead telling us how they helped a customer develop event-driven analytics using ODI, OBIEE, and the Oracle Reference Architecture for data warehousing.

After all this, a little break and networking, then on to the opening keynote.

It started with the Corporate Sr VP of Fujitsu, who talked about some cloud applications they have deployed in Japan. They have the Agricultural Cloud project to help farmers be more efficient and bring more and better crops to market. They have also developed a Healthcare Cloud Service for optimizing patient care and early diagnosis.

Very cool cloud applications.

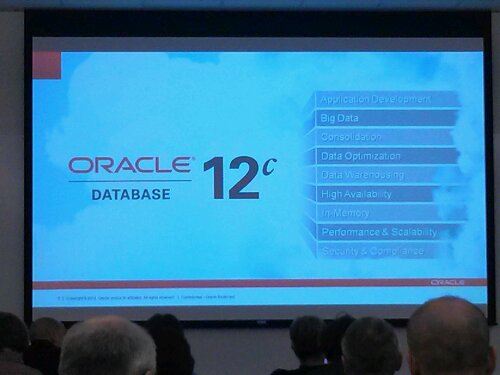

Last up was CEO Larry Ellison, who announced Oracle 12c and Pluggable Databases (to support cloud deployments). I had heard about these (under NDA) at the ACE Directors meeting, so I can share a few pictures related to those now that it is public information.

Bigger, badder, faster…

With PDB, you can develop a plug-and-play database. There are many cool applications for this one.

To end the day, I went to the 9th annual Oracle ACE dinner hosted by Oracle at the St Francis Yacht Club. Great food, drinks, and networking were had by all. Then back to the hotel to write this blog post.

Now off to bed so I can swim the bay with some other crazy people tomorrow morning. Wish me luck. Brrr.

Later.

Kent
