Maintaining disabled FK’s, wisdom or farce?

A while back, I wrote a post about having FKs (foreign keys) in your data warehouse.

Well, a similar question came up recently on an Oracle forum with the above title. It is a fair question and it does surface fairly regularly in a variety of contexts (not just data warehousing).

Of course, as The Data Warrior, I felt is was my duty to respond.

The Question

Is there any reason to maintain a permanently disabled FK in the data model? I’m not envisioning a reason to do it. If it is not going to be enabled, then from my perspective, it would not make any sense to have it defined. If anything, provide the definition of the relationship in the comment of the child column.

My Answer

Yes, by all means keep the FK please!

I see three good reasons for doing so:

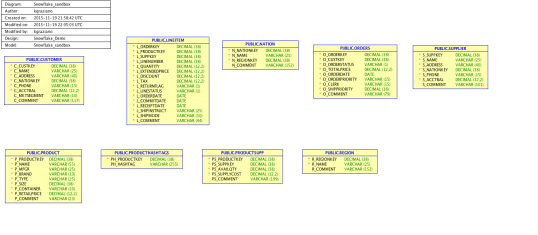

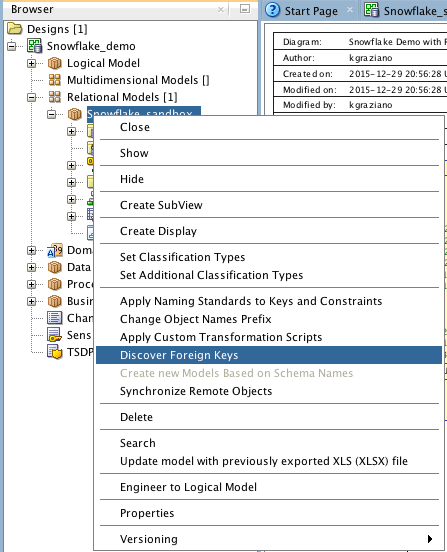

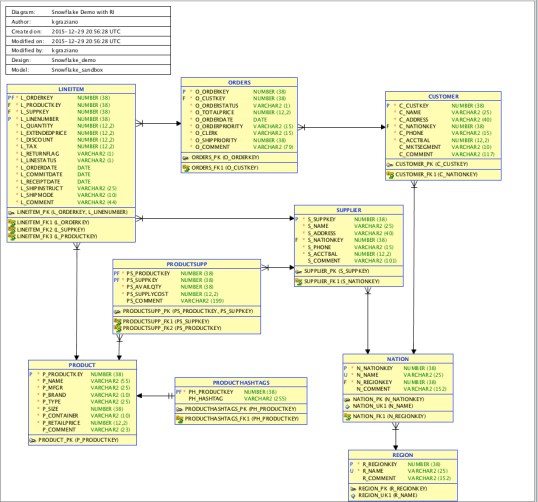

- It is valuable metadata (& documentation). If somebody reverse engineers the database (say with ERWin or Oracle Data Modeler), the FK shows up in the diagram (way better than having to read a column comment to find out)

A picture is worth a thousand words!

.

- BI Metadata – If you want to use any sort of reporting or BI tool against the database, most tools will import the FK definition with the tables and build the proper join conditions. Way better than having someone guess what the join will be and then manually adding it to the metadata layer in the reporting tool. Examples that can read the Oracle data dictionary include OBIEE, Business Objects, COGNOS, Looker, and many others.(Note here that since the FK is not enforced on the remote databases, you might want to make sure these are treated as outer joins, lest you lose some transaction in the reports).

- The Oracle optimizer will use disabled constraints to improve query performance of joins. Again, this is metadata in the data dictionary which the optimizer can read. This is documented in the Oracle Data Warehouse guide and I have validated it on multiple occasions with Oracle product management.

While #3 applies specifically to Oracle, for other databases like MS SQL Server and Snowflake, #1 and #2 still apply.

Even if only one of the above is true for a given database, that, in my opinion, still justifies keeping the disabled constraint around.

Final Answer = Wisdom

What do you think? Feel free to comment below.

And please share on your favorite social media platform!

Model on!

Kent

The Data Warrior

You must be logged in to post a comment.